Evaluation Metrics enable supervisors to define and monitor performance indicators for assessing the quality of agent–customer interactions. These metrics use AI-driven or rule-based analysis to evaluate conversations across different dimensions.

Key Benefits

| Benefit | Description |
|---|---|
| AI-powered intelligence | Uses GenAI for contextual evaluation with minimal training data. |
| Comprehensive coverage | Provides multiple measurement types to address diverse evaluation scenarios. |
| Automated QA | Reduces manual review workload through intelligent analysis. |
| Flexible configuration | Supports both static and dynamic evaluation options. |

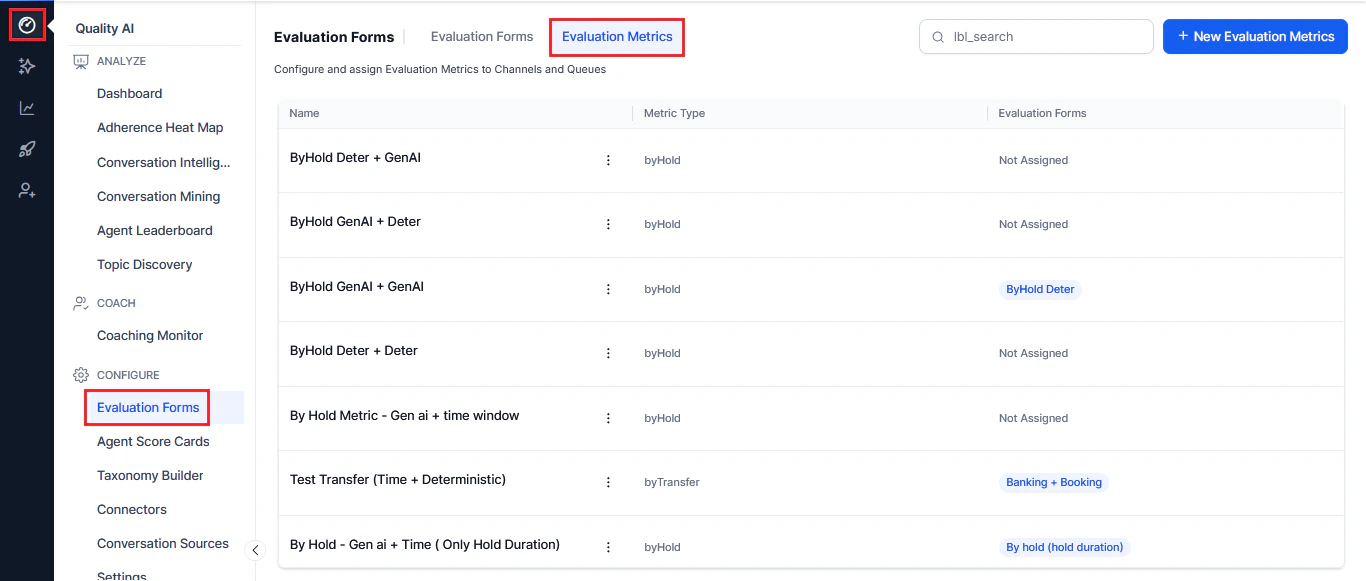

Access Evaluation Metrics

Navigate to Quality AI > Configure > Evaluation Forms > Evaluation Metrics.

| Element | Description |
|---|---|
| Name | Metric name. |
| Metric Type | Measurement type. |
| Evaluation Forms | Associated evaluation forms (for example, By Speech, By Question). |
| Ellipsis icon | Edit and delete options. |
| Search | Quick search to find metrics. |
| New Evaluation Metrics | Option to create new metrics. |

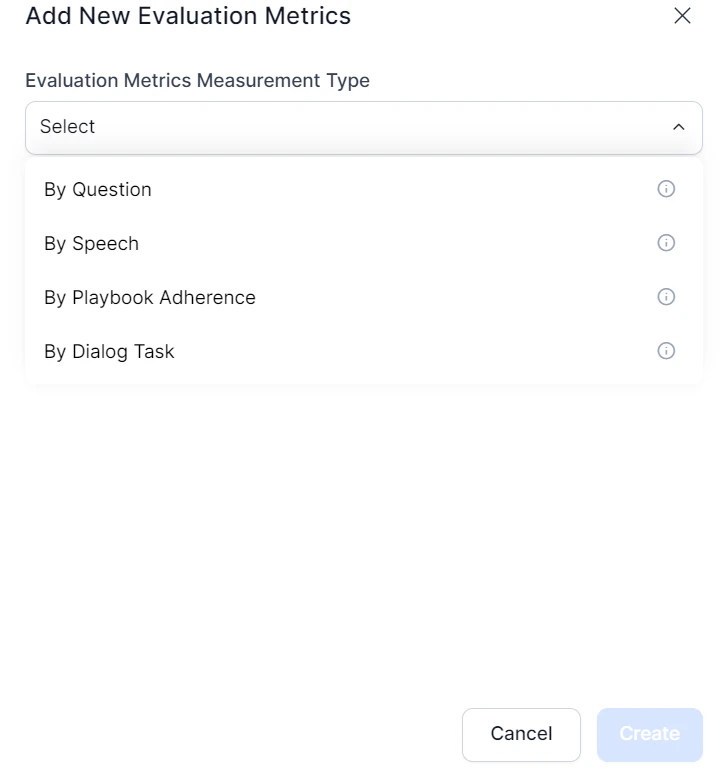

Create a New Evaluation Metric

- Select the Evaluation Metrics tab.

- Select + New Evaluation Metrics.

- Choose an Evaluation Metrics Measurement Type.

Measurement Types

By Question

Evaluates adherence to specific questions asked or answered during interactions. Key features:

- Static Adherence: applies to all conversations

- Dynamic Adherence: conditional evaluation triggered by specific events

- GenAI Detection: contextual understanding with no training samples required

- Deterministic Detection: semantic matching against predefined patterns

- Flexible thresholds: set different similarity scores per use case
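
The interaction between Deterministic Detection and a similarity threshold can be sketched roughly as follows. This is an illustrative sketch only, not the product's implementation: it assumes utterances and predefined patterns are already represented as numeric vectors and that cosine similarity is the comparison measure. The function names and the toy vectors are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def is_adherent(utterance_vec, pattern_vecs, threshold):
    """True if any predefined pattern reaches the similarity threshold."""
    return any(
        cosine_similarity(utterance_vec, pattern) >= threshold
        for pattern in pattern_vecs
    )

# Toy 3-dimensional vectors standing in for real embeddings (hypothetical values).
patterns = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
close_match = [0.9, 0.1, 0.0]
no_match = [0.0, 0.0, 1.0]

print(is_adherent(close_match, patterns, threshold=0.8))
print(is_adherent(no_match, patterns, threshold=0.8))
```

Raising the threshold makes the check stricter, and lowering it accepts looser paraphrases, which is why a per-use-case setting is useful.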

By Speech

Analyzes speech characteristics during voice interactions. Key features:

- Crosstalk: detects overlapping speech with configurable thresholds

- Dead Air: monitors silence periods (configurable duration)

- Speaking Rate: tracks Words Per Minute (WPM)
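
To make the three speech measurements concrete, here is a minimal sketch of how they could be computed from timestamped transcript segments. The `Segment` structure, the function names, and the sample call are assumptions made for illustration; the product's actual calculations and input format are not described here.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    speaker: str   # "agent" or "customer" (hypothetical labels)
    start: float   # seconds from the start of the call
    end: float
    text: str

def words_per_minute(segments, speaker):
    """Speaking rate: words spoken divided by the speaker's talk time."""
    own = [s for s in segments if s.speaker == speaker]
    words = sum(len(s.text.split()) for s in own)
    seconds = sum(s.end - s.start for s in own)
    return words * 60 / seconds if seconds > 0 else 0.0

def dead_air_gaps(segments, min_gap):
    """Silences of at least min_gap seconds during which nobody speaks."""
    ordered = sorted(segments, key=lambda s: s.start)
    gaps = []
    latest_end = ordered[0].end if ordered else 0.0
    for seg in ordered[1:]:
        if seg.start - latest_end >= min_gap:
            gaps.append((latest_end, seg.start))
        latest_end = max(latest_end, seg.end)
    return gaps

def crosstalk_seconds(segments):
    """Total overlap between agent and customer speech.

    Assumes segments from the same speaker do not overlap each other.
    """
    agent = [s for s in segments if s.speaker == "agent"]
    customer = [s for s in segments if s.speaker == "customer"]
    total = 0.0
    for a in agent:
        for c in customer:
            total += max(0.0, min(a.end, c.end) - max(a.start, c.start))
    return total

segments = [
    Segment("agent", 0.0, 6.0, "thank you for calling how can I help you today"),
    Segment("customer", 5.0, 9.0, "I need help with my bill"),
    Segment("agent", 14.0, 20.0, "sure let me pull up your account"),
]

print(words_per_minute(segments, "agent"))   # 17 words over 12 s of talk time
print(dead_air_gaps(segments, min_gap=3.0))  # silence between 9 s and 14 s
print(crosstalk_seconds(segments))           # overlap between 5 s and 6 s
```

A configurable threshold would then be applied to each result, for example flagging a call when any dead-air gap exceeds the configured duration.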

By Value

Verifies customer-specific information shared by an agent against trusted data sources. Key features:

- API integration: real-time verification with CRM and external systems

- Business rules engine: five rule types (first value, last value, negotiated, strict matching, custom)

- Compliance tracking: detects deviations from expected values

- Audit trails: logs validation results for supervisory review

By Dialog Task

Assesses completion and quality of specific tasks or workflows within a conversation. Key features:

- Dialog agent selection: choose which dialog agent to evaluate

- Evaluation scope: entire conversation or time-bound segment

- Time parameters: configurable in seconds (voice) or message count (chat)
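
The evaluation scope options could be modeled along these lines. This sketch assumes a time-bound segment is measured from the start of the conversation, which the description above does not state. The `in_scope` function, the `offset_s` field, and the channel labels are hypothetical.

```python
def in_scope(turns, channel, limit=None):
    """Return the conversation turns that fall inside the evaluation scope.

    turns:   list of dicts with "offset_s" (seconds from start) and "text"
    channel: "voice" or "chat"
    limit:   None for the entire conversation; otherwise a number of
             seconds (voice) or a message count (chat)
    """
    if limit is None:
        return list(turns)
    if channel == "voice":
        return [t for t in turns if t["offset_s"] <= limit]
    if channel == "chat":
        return turns[:limit]
    raise ValueError(f"unknown channel: {channel}")

turns = [
    {"offset_s": 0, "text": "Hello, how can I help?"},
    {"offset_s": 20, "text": "I want to check my order."},
    {"offset_s": 75, "text": "Let me look that up."},
]

print(len(in_scope(turns, "voice", limit=60)))  # turns within the first 60 seconds
print(len(in_scope(turns, "chat", limit=1)))    # first message only
print(len(in_scope(turns, "voice")))            # entire conversation
```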

By Playbook Adherence

Measures how well interactions follow predefined playbooks or procedures. Key features:

- Entire Playbook: assesses adherence across all playbook components

- Specific Steps: targets evaluation at specific stages or steps

- Percentage thresholds: define minimum adherence levels required
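
As a rough illustration of a percentage threshold, the sketch below treats adherence as the share of playbook steps that were followed, either across the entire playbook or for a chosen subset of steps. The formula, function names, and step names are assumptions for illustration, not the product's documented scoring method.

```python
def adherence_percent(step_results, steps=None):
    """Percentage of playbook steps that were followed.

    step_results: dict mapping step name -> True/False
    steps:        optional list of step names to evaluate;
                  None evaluates the entire playbook
    """
    if steps is None:
        selected = step_results
    else:
        selected = {name: step_results[name] for name in steps}
    if not selected:
        return 0.0
    return 100.0 * sum(selected.values()) / len(selected)

def meets_threshold(step_results, minimum_percent, steps=None):
    """True if adherence reaches the configured minimum percentage."""
    return adherence_percent(step_results, steps) >= minimum_percent

# Hypothetical playbook with four steps, one of which was skipped.
results = {
    "greeting": True,
    "verify_identity": True,
    "offer_solution": False,
    "closing": True,
}

print(adherence_percent(results))                            # entire playbook
print(meets_threshold(results, minimum_percent=80))          # 3 of 4 steps followed
print(meets_threshold(results, 80, steps=["greeting", "verify_identity"]))
```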

By AI Agent

Uses AI agents for sophisticated, multi-step evaluations with autonomous decision-making. Key features:

- Complex analysis: multi-step reasoning across conversation elements

- Domain expertise: supports specialized evaluation contexts (compliance, technical support)

- Contextual understanding: nuanced evaluation requiring full conversation context

- Advanced decision-making: goes beyond pattern matching for judgment calls

By Manual Evaluation

Manual Evaluation metrics enable QA teams to assess agent performance through human-led reviews, especially in scenarios where automated detection is less reliable. QA managers configure these metrics in the evaluation form and can assign their weight only in points. Key features:

- Human-Driven Assessment: metrics are evaluated exclusively by QA auditors, without Auto QA involvement.

- Points-Based Only: available only within points-based evaluation forms to ensure accurate scoring allocation.

- No AI Dependency: independent of GenAI, deterministic detection, triggers, and adherence thresholds.

- Clear Visual Identification: appears across Audit screens, Conversation Mining, Heatmaps, and Reports with the suffix (Manual Evaluation Metric).

By Hold

Evaluates how effectively agents manage customer hold scenarios during voice interactions, ensuring proper communication, timing, and resumption behavior. Key features:

- Static Adherence: applies consistently to all conversations with hold events

- Event-driven Evaluation: triggers automatically when hold events occur via telephony integration

- Multi-instance Detection: evaluates multiple hold events within a single interaction

- GenAI Detection: contextual, flexible evaluation using LLM-based understanding

- Deterministic Detection: embedding-based semantic matching against predefined utterances

- Configurable Sub-criteria: assesses hold notification, duration compliance, and call resumption

- Flexible Thresholds: defines similarity scores, hold duration limits, and evaluation windows

- Weighted Scoring: assigns percentage-based contributions to each sub-criterion
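
Weighted scoring across the three sub-criteria can be pictured with a short sketch. It assumes each sub-criterion is a pass/fail result and that the percentage weights sum to 100; the function name, the dictionary keys, and the example weights are hypothetical and not taken from the product.

```python
def weighted_hold_score(results, weights):
    """Combine pass/fail sub-criteria into a single percentage score.

    results: dict mapping sub-criterion -> True/False
    weights: dict mapping sub-criterion -> percentage contribution
             (assumed here to sum to 100)
    """
    if abs(sum(weights.values()) - 100) > 1e-9:
        raise ValueError("weights must sum to 100")
    return sum(weights[name] for name, passed in results.items() if passed)

# Hypothetical weights for the three sub-criteria named above.
weights = {
    "hold_notification": 40,
    "duration_compliance": 40,
    "call_resumption": 20,
}

# One hold event: the agent announced the hold and resumed properly,
# but the hold exceeded the configured duration limit.
hold_event = {
    "hold_notification": True,
    "duration_compliance": False,
    "call_resumption": True,
}

print(weighted_hold_score(hold_event, weights))
```

When an interaction contains several hold events, each event could be scored this way and the results combined, although how the product aggregates multiple instances is not specified here.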

Edit or Delete Evaluation Metrics

- Search for the metric you want to update.

- Select the ellipsis (⋮) menu.

- Choose Edit to modify the metric or Delete to remove it.

- If you are editing, select Update to save changes.